AppPulse – Feedback Intelligence Prototype

Type: Private prototype · Concept exploration (non‑commercial)

My role: Product designer & prompt engineer – problem definition, workflows, and AI‑assisted implementation

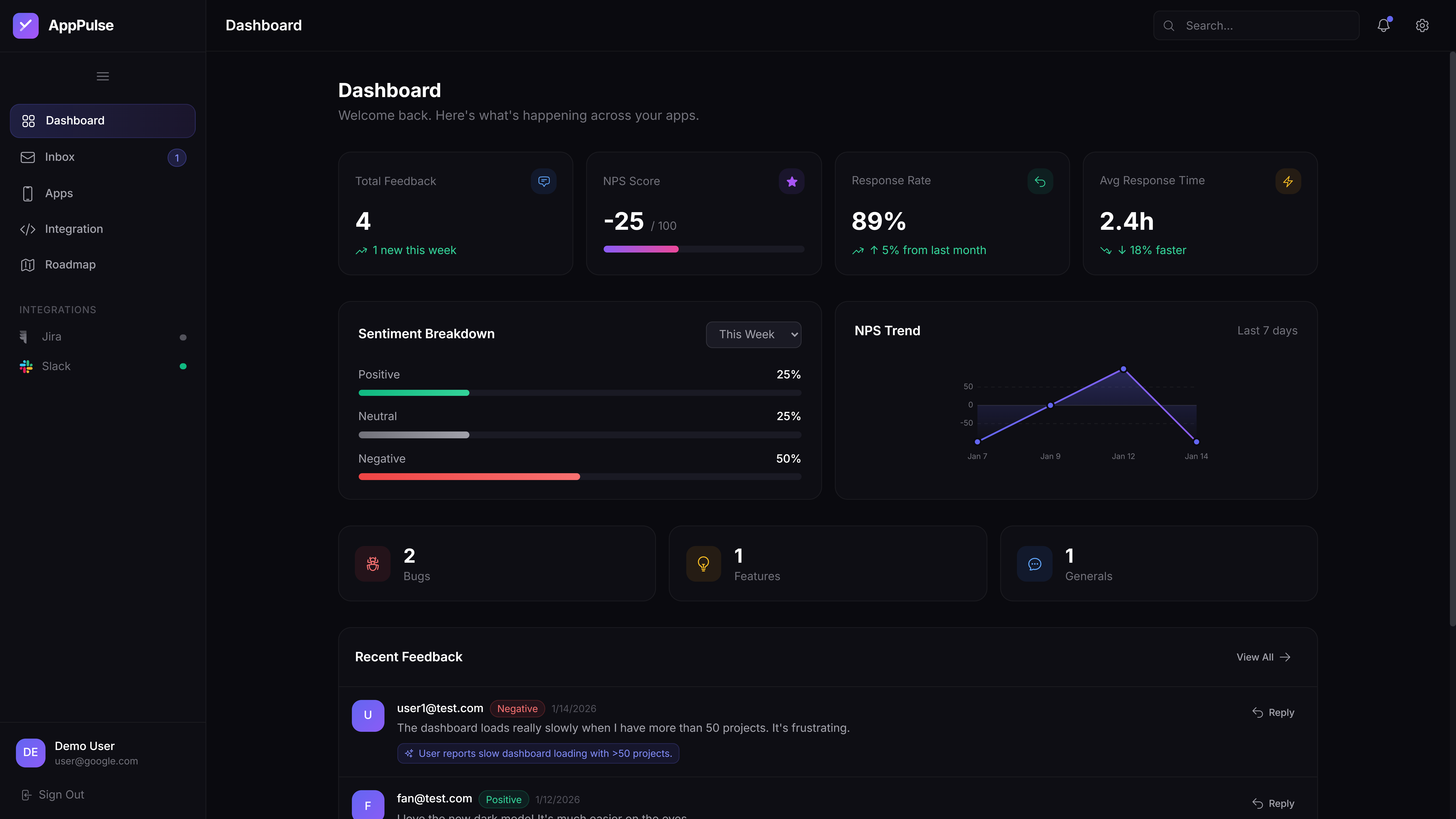

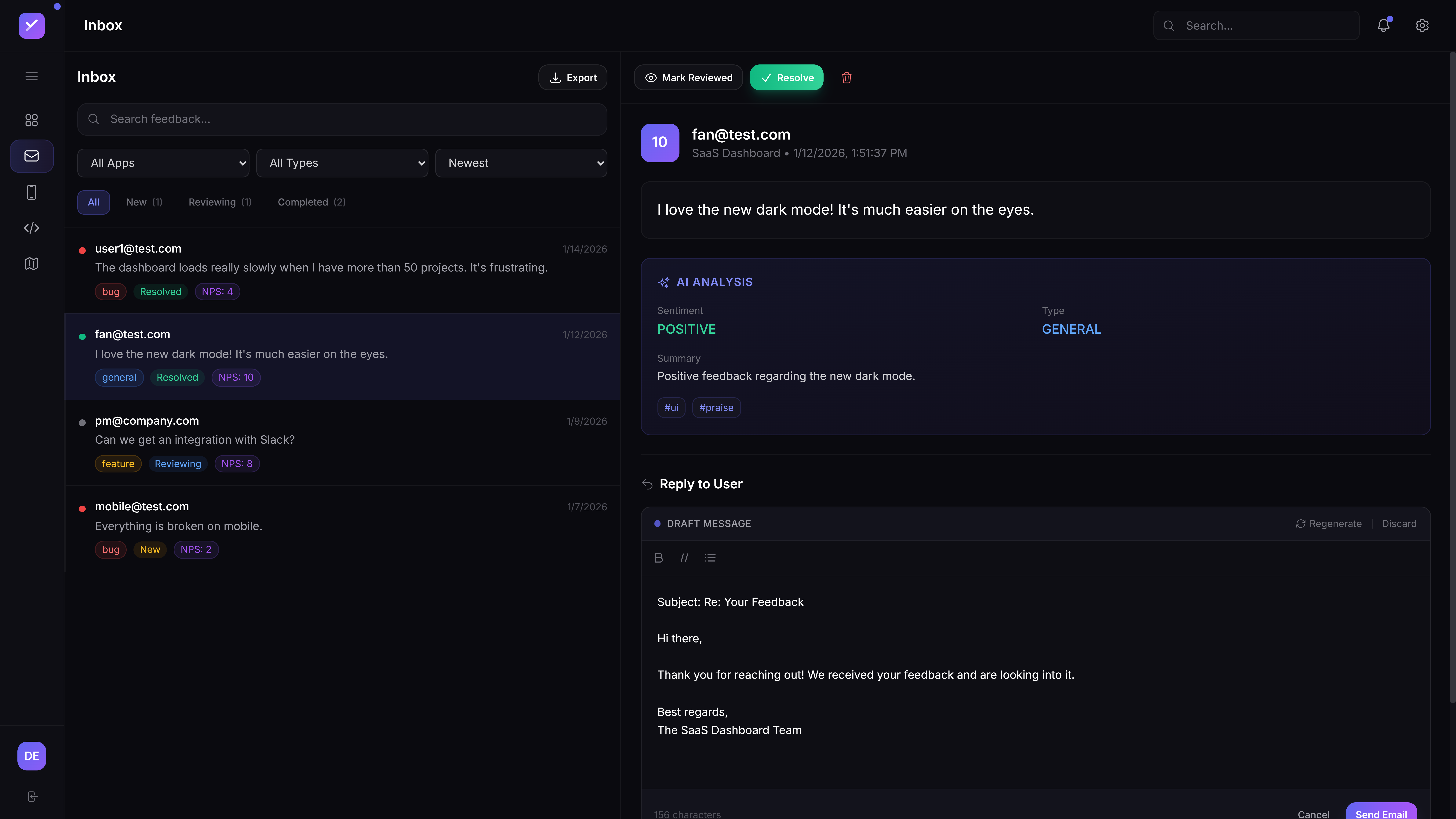

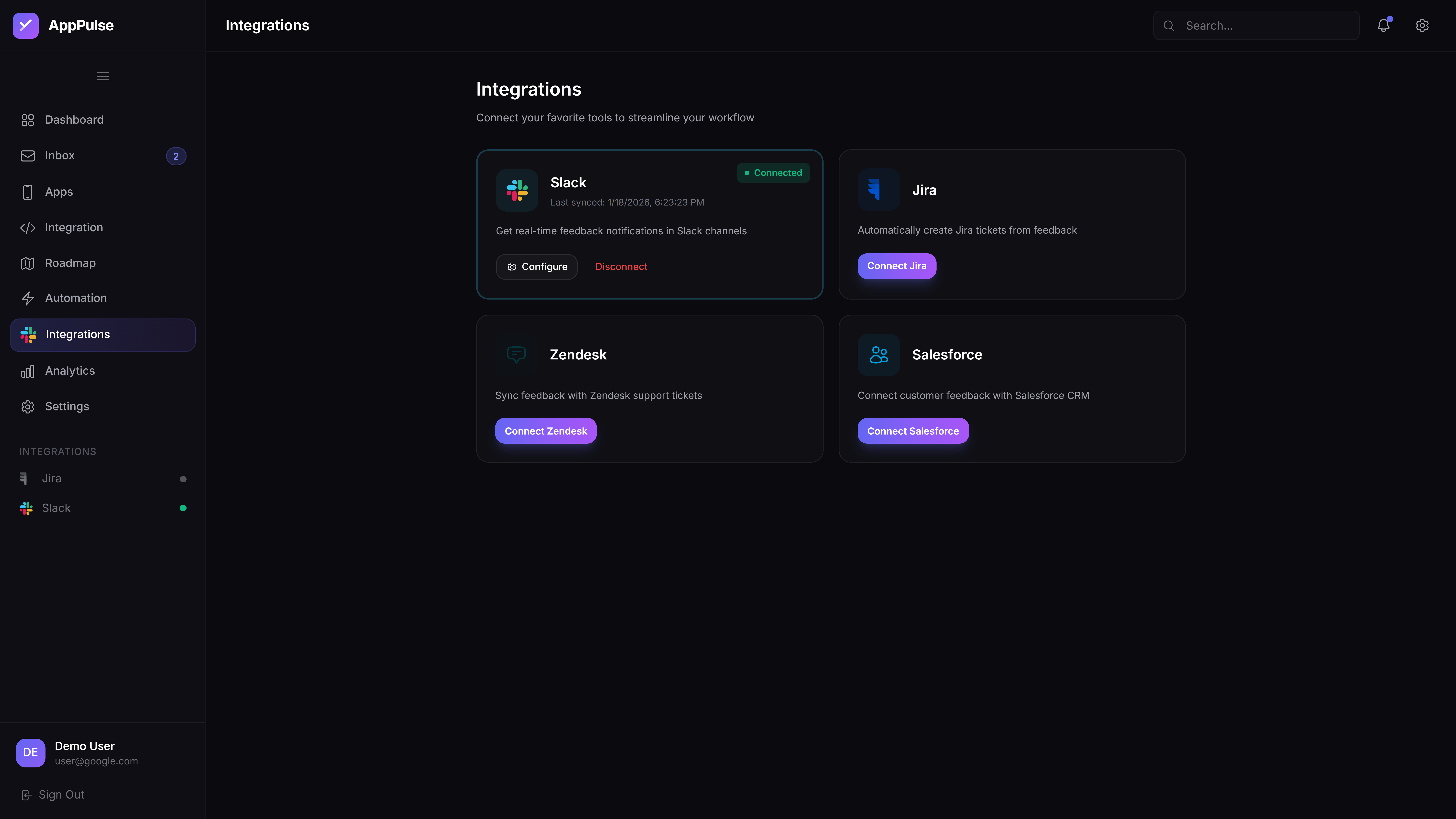

AppPulse started as a way to solve my own problem while building HiLi Notes: I was collecting feedback in spreadsheets, and it took weeks to turn it into clear product decisions. I used this project to explore how far AI tools could take me in designing and building a working prototype for feedback analysis. It is not a public SaaS and has no paying customers.